You could be the star of a porno without even knowing it, thanks to an alarming new trend that has raised legal and ethical concerns in the U.S.

Deepfakes, named after the Redditor who began swapping celebrities’ faces onto porn performers’ bodies, are becoming more popular with the rise of user-friendly applications like FakeApp, which lets people create their own realistic face-swapped videos using machine learning and artificial intelligence.

Despite public backlash over the technology’s ethical and legal implications, current U.S. cyber law might make it difficult for people to protect themselves against its use.

Putting people’s likenesses in pornography when they did not agree to it is called “involuntary pornography,” wrote Alex Halavais, former UB professor and author of the book "Cyberporn and Society," in an email interview with The Spectrum.

“You should not take pictures of people without their permission,” Halavais said. “You certainly shouldn't alter those images. You definitely shouldn't share them –– altered or not –– without the permission of the person involved. This is deeply unethical. It is absolutely a form of harassment. As in most sexual matters, consent is essential.”

Redditor “Dangero” was excited about the technological potential of deepfakes, only to be disappointed upon realizing that much of the community was using the technology primarily to create digitally altered sex videos of celebrities.

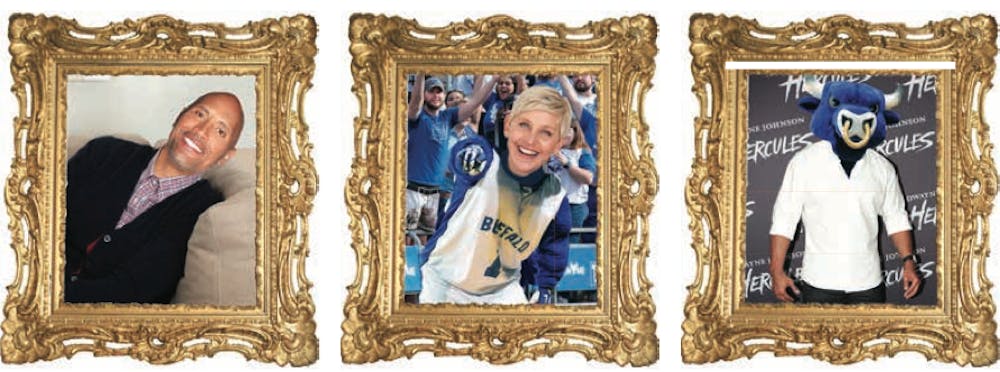

Because of this, Dangero created the subreddit r/deepfakessfw as a PG-rated, comedy-oriented alternative to the now-banned r/deepfakes group. The r/deepfakessfw community now boasts 1,093 members, and its content includes iconic scenes from “Titanic” that feature Nicolas Cage’s face on Kate Winslet’s body, as well as Nicolas Cage’s face on the body of “The Lord of the Rings” character Smeagol.

But outside of Dangero’s efforts, many people create deepfakes not to generate laughs but to produce non-consensual sexual content of celebrities and non-celebrities alike. Celebrity targets have included Emma Watson, Gal Gadot and Taylor Swift, among many others.

The process of creating deepfakes is still relatively time-consuming, but in the future it will be as simple as supplying software with a collection of photographs or a link to an Instagram profile, according to Dangero.

In an interview with Motherboard, the Redditor Deepfakes said he had simply found a clever way of doing face-swaps. He explained that he trained the deep-learning algorithm on Google image searches, stock photos and YouTube videos of individuals so that it could manipulate videos on the fly.

In his case, he trained the algorithm to place “Wonder Woman” star Gal Gadot’s face onto porn videos.

Public opinion aside, current revenge porn law is not up to speed with this technology, said UB law professor and cyber law expert Mark Bartholomew.

Revenge porn laws don’t cover this kind of digitally swapped content because the body in the video does not actually belong to the person who is being depicted, Bartholomew said.

Laws against revenge porn rest on a privacy rationale, which protects “intimate facts” about an individual, Bartholomew said.

In the case of deepfakes, the images are not covered under the privacy rationale because the body and face don’t belong to the same person.

Other loopholes could also prevent legal action against the makers of pornographic deepfakes, according to Bartholomew. One is the difficulty of proving ill intent on the creator’s part; the other stems from the First Amendment right to free speech, which allows citizens to comment on public figures.

Some websites, including Twitter, Pornhub and Reddit, aren’t waiting for the laws to catch up and have already pushed back against deepfakes, banning salacious deepfakes from their platforms in the past week, according to Motherboard.

Emily Walker, a senior computer science major, said she sees deepfakes as a type of defamation because they present a potentially harmful image of a person without that person’s consent.

“I think as a gut reaction it’s good that these companies are saying we should shut this down and eventually the law will maybe catch up,” Walker said.

Some students feel it’s not the responsibility of these platforms to regulate this kind of content.

Laura Flores, a sophomore health and human services major, said she thinks the responsibility lies more with individuals not to post pictures of themselves publicly online.

“It would be hard to regulate, so you can’t blame social media platforms for your face being stuck in porn video,” Flores said. “Because how many people use Twitter? Millions.”

Jeffrey Ye, a senior computer engineering major, said he has mixed feelings on whether platforms should regulate deepfakes.

“These social media platforms can pick and choose what to leave and remove depending on their personal agendas,” Ye said.

Despite backlash from social media platforms, Halavais said deepfakes are only getting started, and he is not surprised they first surfaced in pornography.

“Pretty much every new communication technology is used for erotic or pornographic content very early on,” Halavais said. “Books, film movies, the instant camera, the home video recorders — all of these found their initial market in pornography.”

Haruka Kosugi is an assistant news editor and can be reached at haruka.kosugi@ubspectrum.com and on Twitter at @kosugispec